Learning Objectives

At a high level, this seminar class will give students an overview of what distributed representation learning is about, what the state of the art is, and what the open problems are. The class will select several topics of interest and curate a list of recent important papers on each topic for reading, presentations, and discussions. Along the way, students will review milestone papers in distributed representation learning of language data, studying important training techniques and neural architectures for self-supervised language learning, multi-task learning, transfer learning, and meta-learning. They will also study recent advances in interpretable, compositional neural models that incorporate structured priors, and in models that can jointly induce latent language structures. The hope is that by the end of this class students will have a good understanding of the latest techniques developed for representation learning in NLP, and will be able to develop practical algorithms and implement systems for solving NLP applications.

Contents

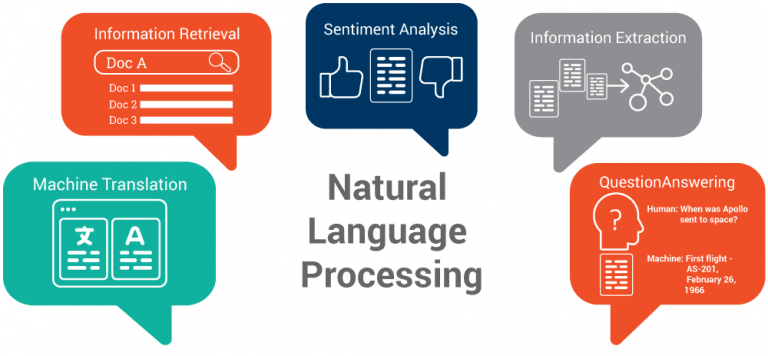

Deep learning basics (methods of training DNNs, regularization in deep learning, representation learning, structured probabilistic models, Monte Carlo methods, confronting the partition function, approximate inference, deep generative models). Multi-task learning, domain adaptation, transfer learning. Few-shot learning, meta-learning, and their applications in NLP. Techniques for model interpretation, adversarial training, interpretable model architectures. Question answering and reading comprehension, commonsense reasoning and inference, multimodal learning (NLP + vision).